On Being a Good .NET Developer

While reading Rob Ashton’s thought-provoking piece titled “Why you can’t be a good .NET developer” over my morning cappuccino the other day, for the first few paragraphs I found myself nodding in agreement.

Having been a consultant for the past fifteen years, I’ve certainly come across more than a few teams where the “lowest common denominator” was without a doubt the driving force behind every decision. This isn’t in any way unique to .NET, though. I have seen the exact same thing happen on other platforms as well: Java, JavaScript and, to some degree, even C and C++ [1].

What they all have in common is a humongous active user base.

You see, it’s simply a matter of statistics: the more popular the platform [2], the higher the number of beginners. The two variables are directly proportional to each other; some might even argue the relationship is exponential. If you’re looking for a concrete example, consider the number of novice JavaScript developers brought in by the popularity of jQuery.

The problem is not that .NET has an unusually high number of “lowest common denominators”. That number is simply higher compared to platforms with a narrower, mostly self-selected, audience.

The problem — and this is where I disagree with the underlying message in that article — is failing a platform based on the number of inexperienced programmers who work with it.

I also don’t think that fleeing is the right way to handle the situation. I don’t know about you, but I like to apply the Boy Scout Rule in more than just code; when I join a team, I want to leave it in better shape than I found it. This means that if I join a team that is dominated by inexperienced programmers, I don’t see it as an excuse to hold back on quality. Quite the opposite: I feel compelled to introduce the team to new ways of doing things, new perspectives. Note that I don’t force anything on anyone; instead, I try to lead by example.

For instance, if I see that the team is stuck using TFS, I will still use Git on my machine and add a bridge like git-tfs to collaborate. Sooner or later, without fail, someone is going to wonder why I do that. Driven by curiosity, they’ll ask me to explain how Git is better than TFS and I’ll be more than happy to tell them all about it. After a while, that same person, or someone else on the team, is going to start using Git on their own machine and, soon enough, the entire team will be sitting in a console firing Git commands like there’s no tomorrow, wondering why they hadn’t learned it earlier.

I never compromise on excellence. It’s just that with some teams, the way to get there is longer than with others.

To me the solution isn’t to run away from beginners. It’s to inspire and mentor them so that they won’t stay beginners forever and instead go on to do the same for other people. That applies as much to .NET as it does to any other platform or language.

If you aren’t the type of person who has the time or the interest to raise the lowest common denominator, that’s perfectly fine. I do believe you’re better off moving somewhere else where your ambitions aren’t being held back by inexperienced team members. As for myself, I’ll stay behind — teaching.

[1] C and C++ have a steep learning curve which forces programmers to move past the beginner stage far more quickly than with other languages in order to get anything done. So, while C and C++ are immensely widespread, the number of novices who work with them tends to stay relatively low.

[2] Just to be clear, by “platform” I mean a programming language together with its ecosystem of libraries, frameworks and tools.

The importance of a good-looking history

“Study the past if you would define the future.” ~Confucius

Since the dawn of civilization, common sense has taught us that the way forward starts by knowing how we got here in the first place. While this powerful principle applies to practically all aspects of life, it’s especially true when developing software.

For us programmers, the rear-view mirror through which we look at the history of a code base before we go on to shape its future is version control. Of all the information captured by a version control tool, the most critical pieces are the commit messages.

Git’s view of history

When we’re trying to understand how a piece of software has evolved over time, the first thing we tend to do is look at the trails of messages left by the programmers who came before us. Those sentences hold the key to understanding the choices that molded the software into what it is today.

In other words, what you write in these messages is crucial and you should put extra effort into making them as loud and clear as possible.

This is true regardless of what version control system you happen to be using. However, it is especially true for Git. Why? Because Git simply holds the history of your code to a higher standard.

As Linus Torvalds explained in his excellent Tech Talk at Google back in 2007, Git evolved out of the need to manage the development of the Linux kernel, a humongous open source project with a 20-year history and hundreds of contributors from all around the world.

If source code history has ever played a more critical role in a software project, the Linux kernel is where it’s at.

Torvalds’ attention to history is also reflected in the features he built into his own distributed version control tool. To put it in his own words:

I want clean history, but that really means (a) clean and (b) history.

Regarding the “clean” part, he goes on to elaborate:

Keep your own history readable.

Some people do this by just working things out in their head first, and not making mistakes. But that’s very rare, and for the rest of us, we use “git rebase” etc. while we work on our problems.

Don’t expose your crap.

When it comes to “history”, he says:

People can (and probably should) rebase their private trees (their own work). That’s a cleanup. But never other people’s code. That’s a “destroy history”

You see, Git grants you all the tools you need to go back in time and rewrite your own commits (for example by changing their order, contents and messages) because having a clear history of the code matters. It matters to the sanity of whoever is working on it, present or future.
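To make the "rewrite your own commits" idea concrete, here is a minimal sketch that fixes a sloppy message on a commit that has not been shared yet. The author identity, file name and messages are made up for illustration; the repository is a throwaway created in a temporary directory.

```shell
#!/bin/sh
# Sketch: clean up a private commit before anyone else sees it.
set -e

# Hypothetical identity so the example runs without any Git configuration.
export GIT_AUTHOR_NAME="Example Dev" GIT_AUTHOR_EMAIL="dev@example.com"
export GIT_COMMITTER_NAME="$GIT_AUTHOR_NAME" GIT_COMMITTER_EMAIL="$GIT_AUTHOR_EMAIL"

# Throwaway repository.
repo=$(mktemp -d)
git init --quiet "$repo"
cd "$repo"

echo "hello" > greeting.txt
git add greeting.txt
git commit --quiet -m "wip"

# Replace the latest commit with one carrying a proper message.
# This is a cleanup of private work, not a rewrite of shared history.
git commit --quiet --amend -m "Add greeting file"

subject=$(git log -1 --format=%s)
count=$(git rev-list --count HEAD)
echo "$count commit(s), latest subject: $subject"
```

For commits further back in your own branch, `git rebase -i` offers the same kind of cleanup (reordering, squashing, rewording), with the same caveat: only on work you have not published.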

A legacy of e-mails

Having talked about the importance of keeping your history clean, let’s take the concept one step further.

When you use Git, you should pay attention not only to the contents of your commit messages, but also to how they're formatted.

There’s a reason for that. As Torvalds himself stated in his Google talk, for a long period of time the history of the Linux kernel was captured in e-mail threads with patches attached:

“For the first 10 years of kernel maintenance we literally used tarballs and patches.” ~Linus Torvalds

Even in the early days of Git, e-mail was still used as a way to send patches among collaborators of the Linux project.

If you look closely, you’ll notice that the concept of e-mail is pretty pervasive throughout Git. Here’s some evidence off the top of my head:

- Every user has to have an e-mail address which is always part of the commit’s metadata

- The git format-patch and git am commands are specifically designed to convert commits into e-mails carrying patches, and back again

- Both git blame and git shortlog have special options to display the committers’ e-mail addresses instead of their names

- The git log command has dedicated placeholders to indicate a commit message’s subject and body

The last one is particularly interesting. Git seems to assume that a commit message is divided in two parts:

- A short one-sentence summary

- An optional longer description defined in its own paragraph separated by an empty line

A “well-formed” Git commit message would then look like this:

A short summary, possibly under 50 characters.

A longer description of the change and the reasoning
behind it for the future generations to know.
Even better if it's wrapped at 80 characters so that
it will look good in the console.

If you follow this simple convention, Git will reward you by going out of its way to show you your history in the prettiest way possible. And that’s a good thing.
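The e-mail heritage is easy to see for yourself. The sketch below, which uses a throwaway repository and a made-up author identity and message, turns a commit into an e-mail with git format-patch: the summary line ends up in the Subject header and the author in the From header.

```shell
#!/bin/sh
# Sketch: a commit rendered as an e-mail by git format-patch.
set -e

# Hypothetical identity so the example runs without any Git configuration.
export GIT_AUTHOR_NAME="Example Dev" GIT_AUTHOR_EMAIL="dev@example.com"
export GIT_COMMITTER_NAME="$GIT_AUTHOR_NAME" GIT_COMMITTER_EMAIL="$GIT_AUTHOR_EMAIL"

repo=$(mktemp -d)
git init --quiet "$repo"
cd "$repo"

echo "hello" > greeting.txt
git add greeting.txt
# The first -m is the summary, the second becomes the body paragraph.
git commit --quiet -m "Add greeting file" -m "Explains why the file exists."

# Write the latest commit to stdout as an mbox-formatted message.
patch=$(git format-patch -1 --stdout)

# Show only the e-mail headers derived from the commit.
printf '%s\n' "$patch" | grep -E '^(From|Subject):'
```

The receiving side would feed such a message to `git am`, which recreates the commit with the same author, summary and body.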

Formatting matters

Once you fall into the habit of keeping your commit summaries under 50 characters and relegating any longer description to a separate paragraph, you can start pretty-printing your history in almost any way you like.

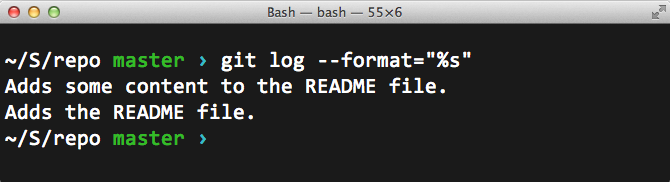

For example, you could choose to only display the commits’ summaries by using the %s placeholder in the --format option of git log:

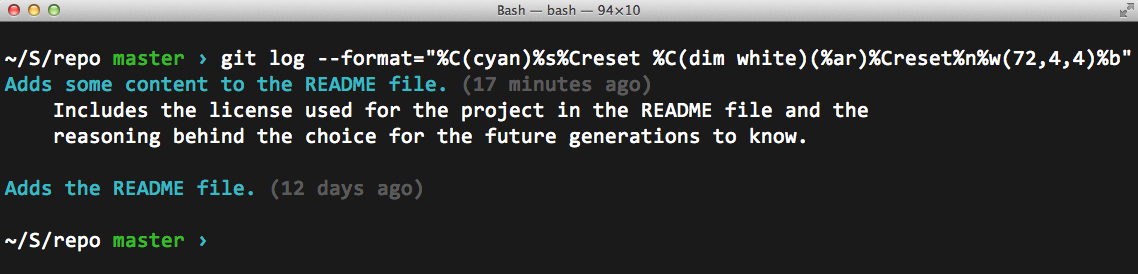

Or you could go crazy with all kinds of colors and indentation:

The format string I used in this particular example can be broken down as:

"%C(cyan)%s%Creset %C(dim white)(%ar)%Creset%n%w(72,4,4)%b"

where:

- %C(cyan) colors the following text in cyan

- %s shows the commit summary

- %Creset restores the default color for the text

- %C(dim white) colors the following text in grey

- %ar shows the time of the commit relative to now

- %n adds a newline character

- %w(72,4,4) wraps the following text at 72 characters, indenting the first line as well as the remaining ones with 4 spaces

- %b shows the long description of the commit, if any
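If you want to try these placeholders without touching a real project, here is a small sketch that builds a throwaway repository and prints one commit three ways. The identity, file name and commit text are invented for the example; the format string is the one broken down above.

```shell
#!/bin/sh
# Sketch: the %s and %b placeholders, then the full format string.
set -e

# Hypothetical identity so the example runs without any Git configuration.
export GIT_AUTHOR_NAME="Example Dev" GIT_AUTHOR_EMAIL="dev@example.com"
export GIT_COMMITTER_NAME="$GIT_AUTHOR_NAME" GIT_COMMITTER_EMAIL="$GIT_AUTHOR_EMAIL"

repo=$(mktemp -d)
git init --quiet "$repo"
cd "$repo"

echo "hello" > greeting.txt
git add greeting.txt
git commit --quiet -m "Add greeting file" \
    -m "Gives newcomers something friendly to read."

# Summary only, then body only.
summary=$(git log -1 --format=%s)
body=$(git log -1 --format=%b)

# Single quotes keep the shell from touching the % placeholders.
format='%C(cyan)%s%Creset %C(dim white)(%ar)%Creset%n%w(72,4,4)%b'
pretty=$(git log -1 --format="$format")

printf 'summary: %s\nbody: %s\n\n%s\n' "$summary" "$body" "$pretty"
```

Depending on your Git version and color settings, the colors may only appear when the output goes to a terminal rather than a pipe or a variable.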

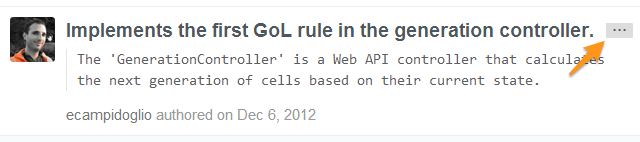

GitHub itself follows this convention when showing the commit history of a project. In fact, they will only show you the summary of each commit by default. If there’s a longer description available, they allow you to expand it with the press of a button.

Pretty-printed commit message on GitHub


Enforcing the rule

Of course, this all works best if everyone on the project agrees to follow the convention.

But how do you ensure that the team sticks to the golden rule of pretty commits™?

Well, you give your peers a gentle nudge at exactly the right moment: just when they’re about to make a commit. This is what Jeff Atwood calls the “Just In Time” theory:

You do it by showing them:

- the minimum helpful reminder

- at exactly the right time

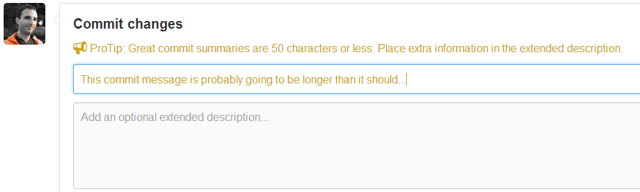

GitHub does this already, both on the Web:

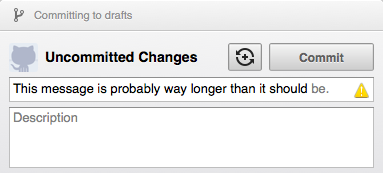

Commit message being validated in the GitHub web UI


and in its desktop clients:

Commit message being validated in GitHub for Mac...


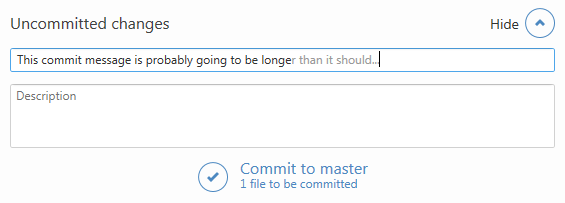

...and in GitHub for Windows


But what if you prefer to use Git from the command line, the way it should be?

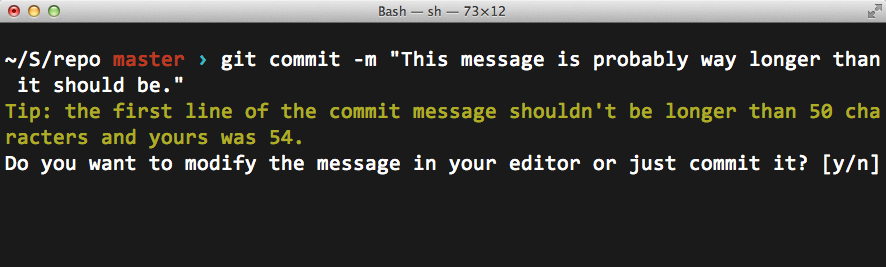

Easy. You write a shell script that gets triggered by Git’s client side hooks every time you’re about to do a commit. In that script, you make sure the message is formatted according to the rules.

Here’s my version of it:

#!/bin/bash
#
# A hook script that checks the length of the commit message.
#
# Called by "git commit" with one argument, the name of the file
# that has the commit message. The hook should exit with non-zero
# status after issuing an appropriate message if it wants to stop the
# commit. The hook is allowed to edit the commit message file.
#
# Uses Bash features ("function", "[[ ]]", "read -n"), hence the shebang.

DEFAULT="\033[0m"
YELLOW="\033[1;33m"

function printWarning {
    message=$1
    # Pass the text as an argument so a "%" in it is not read as a format
    printf >&2 "${YELLOW}%s${DEFAULT}\n" "$message"
}

function printNewline {
    printf "\n"
}

function captureUserInput {
    # Assigns stdin to the keyboard
    exec < /dev/tty
}

function confirm {
    question=$1
    read -p "$question [y/n]"$'\n' -n 1 -r
}

messageFilePath=$1
# Read only the first line; quoting keeps paths with spaces intact
firstLine=$(sed -n 1p "$messageFilePath")
firstLineLength=${#firstLine}

test "$firstLineLength" -lt 51 || {
    printWarning "Tip: the first line of the commit message shouldn't be longer than 50 characters and yours was $firstLineLength."
    captureUserInput
    confirm "Do you want to modify the message in your editor or just commit it?"

    if [[ $REPLY =~ ^[Yy]$ ]]; then
        # Fall back to vi when EDITOR is not set
        ${EDITOR:-vi} "$messageFilePath"
    fi

    printNewline
    exit 0
}

In order to use it in your local repo, you’ll have to manually copy the script file into the .git/hooks directory and call it commit-msg. Finally, you’ll have to grant execute rights to the file in order to make it runnable:

cp commit-msg somerepo/.git/hooks

chmod +x somerepo/.git/hooks/commit-msg

From that point forward, every time you attempt to create a commit that doesn’t follow the rules you’ll get a chance to do the right thing:

If you choose to press y, the commit message will open up in your default text editor from which you can rewrite it properly. Pressing n, on the other hand, will override the rule altogether and commit the message as it is.

Not that you’d ever want to do that.
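Hooks in .git/hooks are not copied along when someone clones the repository, so every teammate has to install the script by hand. One possible way around that, sketched below, is to keep the hooks in a versioned directory and point Git at it with the core.hooksPath setting (available since Git 2.9). Note the assumptions: the .githooks directory name is arbitrary, and the hook here is a deliberately simplified, non-interactive variant that rejects long summaries outright instead of asking, so it is not the script shown above.

```shell
#!/bin/sh
# Sketch: a strict commit-msg hook shared through core.hooksPath.
set -e

# Hypothetical identity so the example runs without any Git configuration.
export GIT_AUTHOR_NAME="Example Dev" GIT_AUTHOR_EMAIL="dev@example.com"
export GIT_COMMITTER_NAME="$GIT_AUTHOR_NAME" GIT_COMMITTER_EMAIL="$GIT_AUTHOR_EMAIL"

repo=$(mktemp -d)
git init --quiet "$repo"
cd "$repo"

# A hooks directory that can be committed along with the code.
mkdir .githooks
cat > .githooks/commit-msg <<'EOF'
#!/bin/sh
# Strict variant: refuse any summary line longer than 50 characters.
firstLine=$(sed -n 1p "$1")
if [ "${#firstLine}" -gt 50 ]; then
    echo "Summary is ${#firstLine} characters; keep it at 50 or fewer." >&2
    exit 1
fi
EOF
chmod +x .githooks/commit-msg

# Each clone still has to opt in once with this setting.
git config core.hooksPath .githooks

echo "hello" > greeting.txt
git add greeting.txt

long="This summary line is deliberately much longer than fifty characters"
if git commit --quiet -m "$long" 2>/dev/null; then
    rejected=no
else
    rejected=yes
fi

git commit --quiet -m "Add greeting file"
accepted=$(git log -1 --format=%s)
echo "long summary rejected: $rejected; committed instead: $accepted"
```

Whichever variant you pick, `git commit --no-verify` skips the commit-msg hook entirely, so the hook remains a nudge rather than a guarantee.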

Leveraging the cloud for fun and games

Friday night, February 21, 2014. That’s when the tretton37 Counter-Strike: Global Offensive fragfest was bound to start. Avid gamers looking to share virtual blood together were eager to join in from our offices in Lund and Stockholm. A few more would play over the Internet.

The time and place were set. Pizzas were ordered. Everything was ready to go. Except for one thing:

We didn’t have a dedicated Counter-Strike server to host the game on.

Finding a spare machine to dedicate for that one night wasn’t an easy task, given our requirements:

- The host should be reachable from the Internet

- The machine should have enough hardware to handle a CS:GO game with 15+ players

- The machine should be able to scale up as more players join the game

- The whole thing should be a breeze to set up

For days I pondered my options when, suddenly, it hit me:

Where's the place to find commodity hardware that's available for rent, is on the Internet and can scale at will?

The cloud, of course! This realization fell on my head like the proverbial apple from the tree.

Step 1: Getting a machine in the cloud

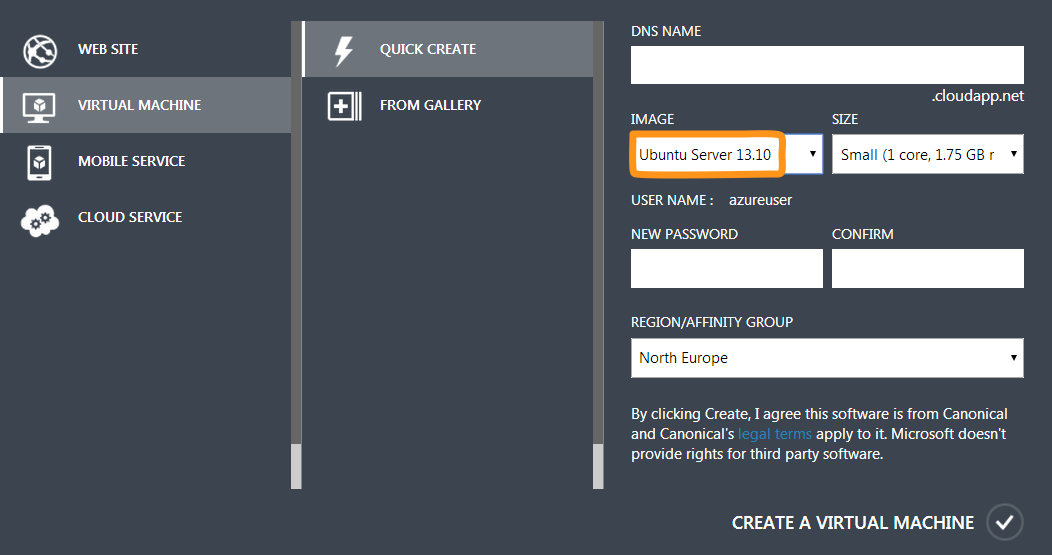

Valve puts out their Source Dedicated Server software both for Windows and Linux. The Windows version has a GUI and is generally what you’d call “user friendly”. The Linux version, on the other hand, is lean & mean and is managed entirely from the command line. Programmers being programmers, I decided to go for the Linux version.

Now, having established that I needed a Linux box, the next question was: which of the available clouds was I going to entrust with our gaming night? Since tretton37 is mainly a Microsoft shop, it felt natural to go for Microsoft Azure. However, I wasn’t holding any high hopes that they would allow me to install Linux on one of their virtual machines.

As it turned out, I had to eat my hat on that one. Azure does, in fact, offer pre-installed Linux virtual machines ready to go. To me, this is proof that the cloud division at Microsoft totally gets how things are supposed to work in the 21st century. Kudos to them.

After literally 2 minutes, I had an Ubuntu Server machine with root access via SSH running in the cloud.

If I hadn’t already eaten my hat, I would take it off for Azure.

Step 2: Installing the Steam Console Client

Hosting a CS:GO server implies setting up a so-called Source Dedicated Server, also known as SRCDS. That’s Valve’s server software used to run all their games that are based on the Source Engine. The list includes Half-Life 2, Team Fortress, Counter-Strike and so on.

An SRCDS is easily installed through the Steam Console Client, or SteamCMD. The easiest way to get it on Linux is to download it and unpack it from a tarball. But first things first.

It’s probably a good idea to run the Source server with a dedicated user account that doesn’t have root privileges. So I went ahead and created a steam user, switched to it and headed to its home directory:

adduser steam

su steam

cd ~

Next, I needed to install a few libraries that SteamCMD depends on, like the GNU C compiler and its friends. That’s where I hit the first roadblock.

steamcmd: error while loading shared libraries: libstdc++.so.6: cannot open shared object file: No such file or directory

Uh? A quick search on the Internet revealed that SteamCMD doesn’t like to run on a 64-bit OS. In fact:

SteamCMD is a 32-bit binary, so it needs 32-bit libraries.

On the other hand:

The prepackaged Linux VMs available in Azure come in 64 bit only.

Ouch. Luckily, the issue was easily solved by installing the right version of Libgcc:

apt-get install lib32gcc1
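If you want to know up front whether a machine will run into the same problem, a quick check of the architecture is enough. This is only a sketch: the function name is made up, and it treats any 64-bit x86 system as needing the 32-bit runtime, which matches the situation described here but is not an official rule.

```shell
#!/bin/sh
# Sketch: decide whether the 32-bit runtime step applies to this machine.

# Succeeds when the given machine name (as printed by `uname -m`)
# identifies a 64-bit x86 system.
needs_32bit_libs() {
    case "$1" in
        x86_64|amd64) return 0 ;;
        *) return 1 ;;
    esac
}

if needs_32bit_libs "$(uname -m)"; then
    echo "64-bit OS detected: install the 32-bit runtime (for example lib32gcc1) first"
else
    echo "Not a 64-bit x86 OS: the lib32gcc1 step does not apply as written"
fi
```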

Finally, I was ready to download the SteamCMD binaries and unpack them:

wget http://media.steampowered.com/installer/steamcmd_linux.tar.gz

tar xzvf steamcmd_linux.tar.gz

The client itself was kicked off by a Bash script:

cd ./steamcmd

./steamcmd.sh

That brought down the necessary updates to the client tools and started an interactive prompt from where I could install any of Valve’s Source games servers.

At this point, I could have continued down the same route and installed the CS:GO Dedicated Server (CSGO DS) by using SteamCMD.

However, a few intricate problems would be waiting further down the road. So, I decided to back out and find a better solution.

Steam> quit
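For reference, the route I didn't take can also be scripted, because SteamCMD accepts its prompt commands as arguments prefixed with a plus sign. The sketch below only builds and prints that argument list, so it is safe to run anywhere. The helper name and the install directory are hypothetical; 740 is the Steam app ID of the CS:GO dedicated server.

```shell
#!/bin/sh
# Sketch: the SteamCMD arguments for an unattended CS:GO server install.

# Prints the argument list for a given install directory.
# Assumes the path contains no spaces, since the result is word-split.
steamcmd_args() {
    echo "+force_install_dir $1 +login anonymous +app_update 740 validate +quit"
}

# Dry run: show the command instead of executing it.
# The directory below is only an example.
echo "./steamcmd.sh $(steamcmd_args /home/steam/csgo-ds)"
```

To perform the installation for real, you would run the printed command from the steamcmd directory as the steam user.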

Step 3: Installing the CS:GO Dedicated Server

Remember that thing about SteamCMD being a 32-bit binary and the Linux VM on Azure being only available in 64-bit?

Well, that turned out to be a bigger issue than I thought. Even after having successfully installed the CS:GO server, getting it to run became a nightmare. The server was constantly complaining about the wrong version of some obscure libraries. Files and directories were missing. Everything was a mess.

Salvation came in the form of a meticulously crafted script, designed to take care of those nitty-gritty details for me.

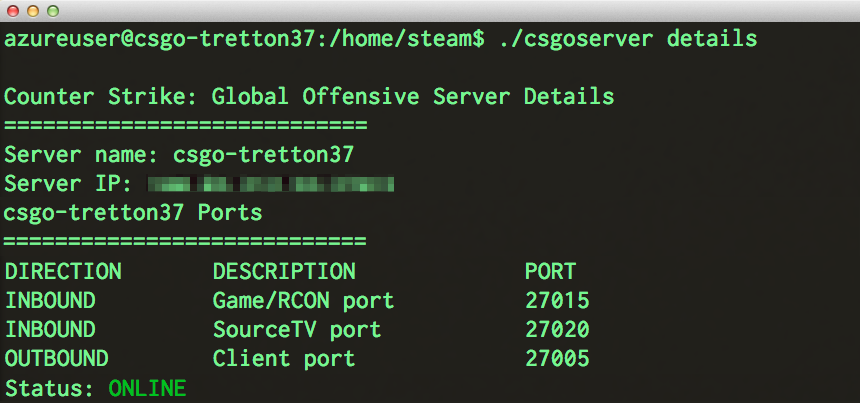

Thanks to Daniel Gibbs' hard work, I could use his fabulous csgoserver script to install, configure and, above all, manage our CS:GO Dedicated Server without pain.

You can find a detailed description of how to use the csgoserver script on his site, so I’m just gonna report how I configured it to suit our deathmatch needs.

Step 4: Configuration

The CS:GO server can be configured in a few different ways and it’s all done in the server.cfg configuration file. In it, you can set up things like the game mode (Arms Race, Classic, Competitive to name a few), the maximum number of players and so on.

Here’s how I configured it for the tretton37 deathmatch:

sv_password "secret" // Requires a password to join the server
sv_cheats 0 // Disables hacks and cheat codes
sv_lan 0 // Disables LAN mode

Step 5: Gold plating

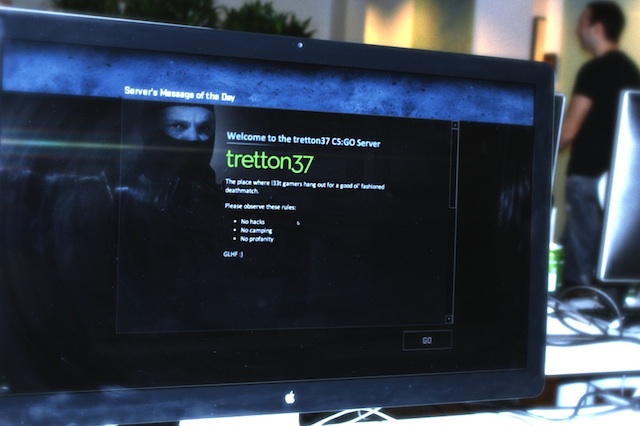

The final touch was to provide an appropriate Message of the Day (or MOTD) for the occasion. That would be the screen that greets the players as they join the game, setting the right tone.

Once again, the whole thing was done by simply editing a text file. In this case, the file contained some HTML markup and a few stylesheets and was located in /home/steam/csgo/motd.txt.

Here’s what it looked like in action:

Step 6: Deathmatch!

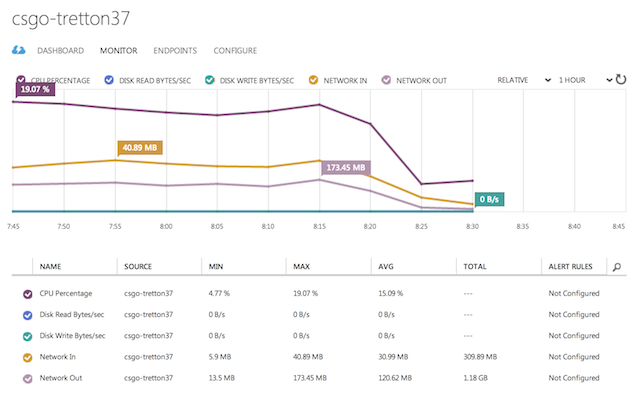

This article is primarily meant as a reference on how to configure a dedicated CS:GO server on a Linux box hosted on Microsoft Azure. Nonetheless, I figured it would be interesting to follow up with some information on how the server itself held up during that glorious game night.

Here are a few stats taken from both the Azure Dashboard and the operating system itself. Note that the server was running on a Large VM sporting a quad-core 1.6 GHz CPU and 7 GB of RAM:

- Number of simultaneous players: 16

- Average CPU load: 15 %

- Memory usage: 2.8 GB

- Total outbound network traffic served: 1.18 GB

In retrospect, that configuration was probably a little overkill for the job. A Medium VM with a dual-core 1.6 GHz CPU and 3.5 GB of RAM would have probably sufficed. But hey, elastic scaling is exactly what the cloud is for.
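If you want to collect the operating-system side of such numbers yourself, the Linux /proc filesystem is enough. The following is a minimal sketch, not the method used for the figures above: the helper name is made up, it assumes a kernel recent enough to report MemAvailable, and it counts "used" memory as total minus available.

```shell
#!/bin/sh
# Sketch: sample memory usage and load from /proc on a Linux host.

# Prints the memory in use, in MiB, from a meminfo-formatted file.
# Values in /proc/meminfo are expressed in kB.
used_mib() {
    awk '/^MemTotal:/ {total=$2}
         /^MemAvailable:/ {avail=$2}
         END {printf "%d\n", (total - avail) / 1024}' "$1"
}

if [ -r /proc/meminfo ] && [ -r /proc/loadavg ]; then
    echo "Memory in use: $(used_mib /proc/meminfo) MiB"
    # First field of /proc/loadavg is the 1-minute load average.
    echo "Load average (1 min): $(cut -d ' ' -f 1 /proc/loadavg)"
else
    echo "/proc is not available on this system"
fi
```

Keep in mind that the load average is not the same thing as the CPU percentage shown by a cloud dashboard, so the two are not directly comparable.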

One final thought

Oddly enough, this experience opened my eyes to the great potential of cloud computing.

The CS:GO server was only intended to run for the duration of the event, which would last for a few hours. During that short period of time, I needed it to be as fast and responsive as possible. Hence, I went all out on the hardware.

As soon as the game night was over, I immediately shut down the virtual machine. The total cost for borrowing that awesome hardware for a few hours? Literally peanuts.

Amazing.